Fake news has proved to be a big problem for Facebook in recent months, particularly during the US elections. After criticism from many in the media and from the general public, Facebook has revealed a plan to deal with the problem.

We believe in giving people a voice and that we cannot become arbiters of truth ourselves, so we’re approaching this problem carefully. We’ve focused our efforts on the worst of the worst, on the clear hoaxes spread by spammers for their own gain, and on engaging both our community and third party organizations.

– Adam Mosseri, VP, News Feed

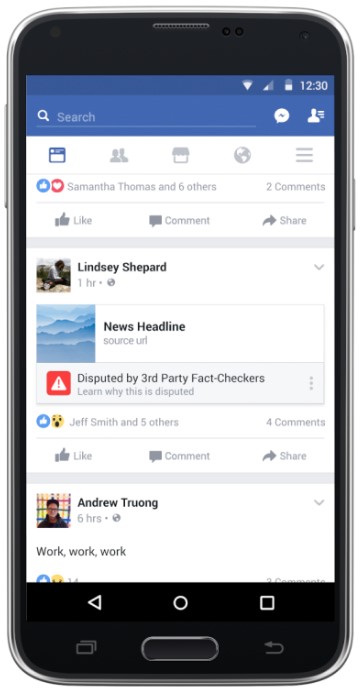

In a blog post published today, the company announced that it will enlist third parties and users to help identify and remove fake news from the site. First, Facebook is testing ways for users to report a news story they think is fake. Click the upper right corner of the post, and you will see options to report the story.

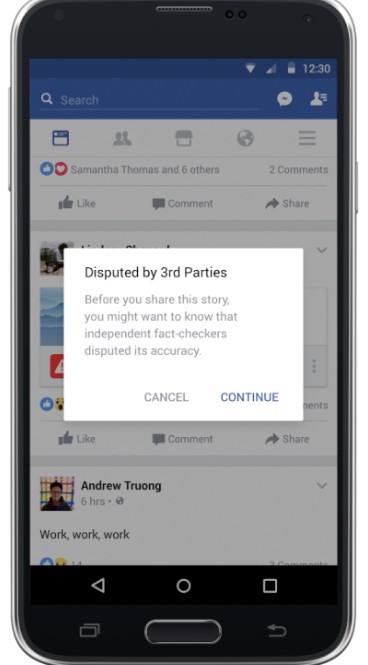

Apart from this, Facebook is now working with several third-party fact-checking services to identify fake stories and hoaxes. Once the community has flagged a story as fake, the social networking giant will send it to fact-checking organizations. If the story is found to be false, Facebook says that anyone clicking on the link will be warned and, if possible, shown the correct news.

Finally, Facebook says it is taking other steps to reduce the financial incentives for fake news sites. Spammers will no longer be able to spoof domains, although the company has not disclosed how it will prevent this.

There is no guarantee that these steps will stop fake news completely, but it is good to know that Facebook has started to take action.
